3D Semantic MapNet: Building Maps for Multi-Object Re-Identification in 3D

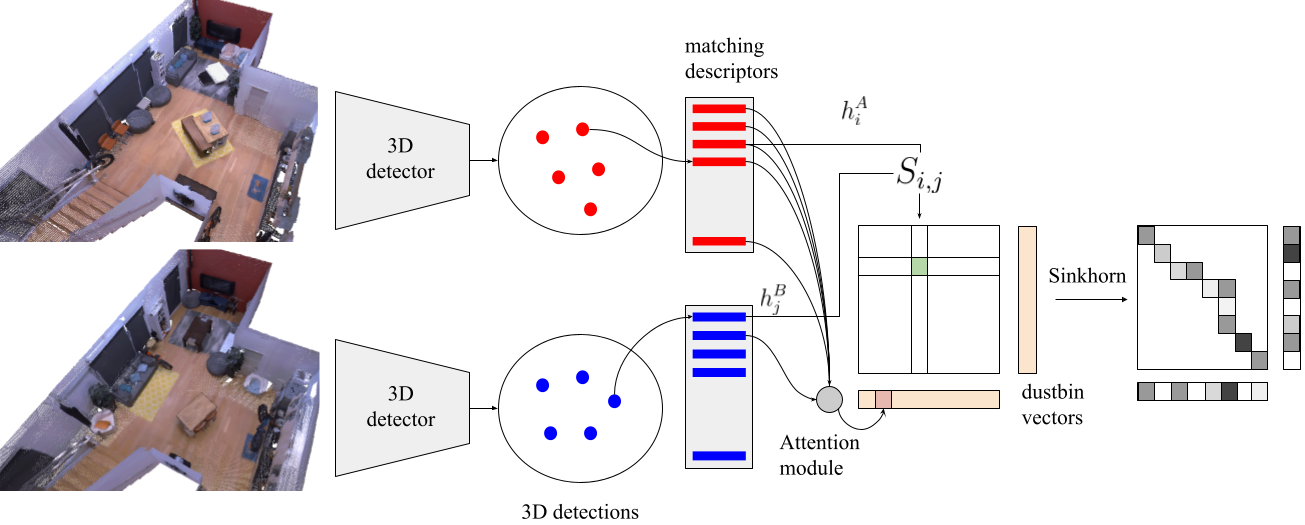

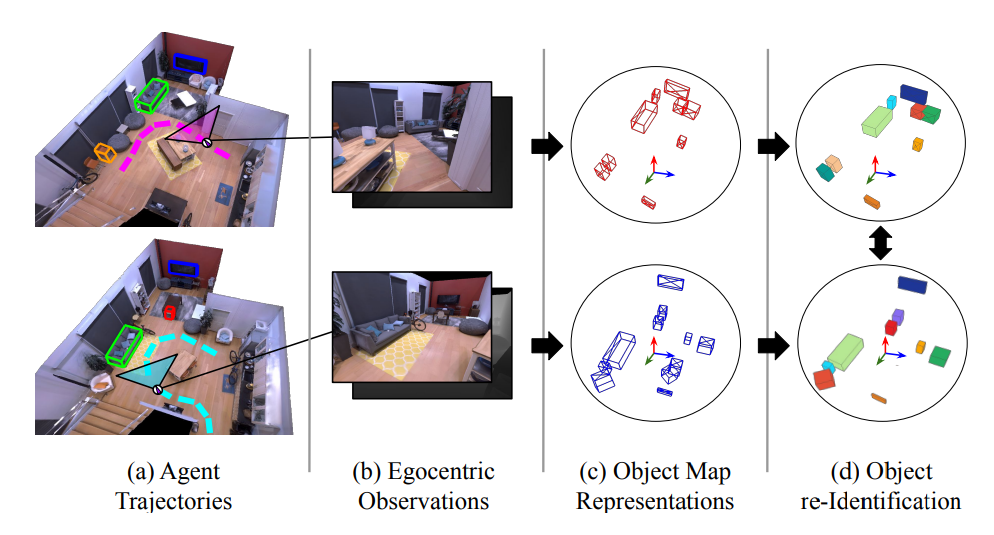

We study the task of 3D multi-object re-identification from embodied tours. Specifically, an agent is given two tours of an environment (e.g., an apartment) under two different layouts (e.g., arrangements of furniture). Its task is to detect and re-identify objects in 3D: for example, a sofa moved from location A to B, a new chair at location C in the second layout, or a lamp at location D in the first layout that is missing from the second. To support this task, we create an automated infrastructure to generate paired egocentric tours of initial and modified layouts in the Habitat simulator using Matterport3D scenes, YCB objects, and Google Scanned Objects. We present 3D Semantic MapNet (3D-SMNet), a two-stage re-identification model consisting of (1) a 3D object detector that operates on RGB-D videos with known pose and (2) a differentiable object matching module that solves correspondence estimation between two sets of 3D bounding boxes. Overall, 3D-SMNet builds an object-based map of each layout and then uses the differentiable matcher to re-identify objects across the two tours. After training 3D-SMNet on our generated episodes, we demonstrate zero-shot transfer to real-world rearrangement scenarios by instantiating our task in Replica, Active Vision, and RIO environments that depict rearrangements. On all datasets, we find that 3D-SMNet outperforms competitive baselines. Further, we show that jointly training on real and generated episodes can lead to significant improvements over training on real data alone.
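The abstract does not say how the differentiable matcher is built. One common way to make box-to-box correspondence differentiable while still allowing boxes with no partner (new or missing objects) is entropic optimal transport with an extra "dustbin" row and column, solved by Sinkhorn iterations, as popularized by SuperGlue. The sketch below assumes that formulation; it is not necessarily what 3D-SMNet does. The score matrix (which a real model would compute from learned box features), `dustbin_score`, `threshold`, `move_tol`, and every function name here are hypothetical. The final step sorts objects into the moved / unchanged / missing / new outcomes described above (the sofa, chair, and lamp cases).

```python
import numpy as np


def _logsumexp(x: np.ndarray, axis: int) -> np.ndarray:
    """Numerically stable log(sum(exp(x))) along one axis."""
    mx = np.max(x, axis=axis, keepdims=True)
    return np.squeeze(mx, axis=axis) + np.log(np.sum(np.exp(x - mx), axis=axis))


def log_sinkhorn_with_dustbin(scores, dustbin_score=0.0, n_iters=100):
    """Hypothetical SuperGlue-style matcher (assumption, not 3D-SMNet's code).

    scores[i, j] is an affinity between box i of tour A and box j of tour B.
    An extra dustbin row/column absorbs boxes with no partner.
    Requires m, n >= 1. Returns the log transport plan, shape (m+1, n+1).
    """
    m, n = scores.shape
    aug = np.full((m + 1, n + 1), dustbin_score, dtype=float)
    aug[:m, :n] = scores
    # Each real box carries unit mass; each dustbin can absorb every box
    # of the other tour, so both marginals sum to m + n.
    log_mu = np.log(np.concatenate([np.ones(m), [float(n)]]))
    log_nu = np.log(np.concatenate([np.ones(n), [float(m)]]))
    u, v = np.zeros(m + 1), np.zeros(n + 1)
    for _ in range(n_iters):
        u = log_mu - _logsumexp(aug + v[None, :], axis=1)  # fit row sums
        v = log_nu - _logsumexp(aug + u[:, None], axis=0)  # fit column sums
    return aug + u[:, None] + v[None, :]


def match_boxes(scores, dustbin_score=0.0, threshold=0.2, n_iters=100):
    """Decode matches by mutual argmax over the transport plan."""
    m, n = scores.shape
    P = np.exp(log_sinkhorn_with_dustbin(scores, dustbin_score, n_iters))
    row_best = P[:m].argmax(axis=1)     # best column per A-box (incl. dustbin)
    col_best = P[:, :n].argmax(axis=0)  # best row per B-box (incl. dustbin)
    matches = {}
    for i in range(m):
        j = int(row_best[i])
        if j < n and int(col_best[j]) == i and P[i, j] > threshold:
            matches[i] = j
    return matches


def reidentify(scores, centers_a, centers_b, move_tol=0.5, **kwargs):
    """Label each object as moved, unchanged, missing (A only) or new (B only)."""
    matches = match_boxes(scores, **kwargs)
    result = {"moved": [], "unchanged": [], "missing": [], "new": []}
    for i in range(len(centers_a)):
        if i in matches:
            j = matches[i]
            dist = np.linalg.norm(centers_a[i] - centers_b[j])
            result["moved" if dist > move_tol else "unchanged"].append((i, j))
        else:
            result["missing"].append(i)
    matched_b = set(matches.values())
    result["new"] = [j for j in range(len(centers_b)) if j not in matched_b]
    return result


if __name__ == "__main__":
    # Tour A: sofa (0), lamp (1). Tour B: sofa moved by 2 m (0), new chair (1).
    scores = np.array([[10.0, -10.0], [-10.0, -10.0]])
    centers_a = np.array([[0.0, 0.0, 0.0], [3.0, 0.0, 0.0]])
    centers_b = np.array([[2.0, 0.0, 0.0], [5.0, 0.0, 1.0]])
    print(reidentify(scores, centers_a, centers_b))
    # {'moved': [(0, 0)], 'unchanged': [], 'missing': [1], 'new': [1]}
```

Because the dustbin gives every box a legitimate place to send its mass, the matcher never has to force a one-to-one assignment when objects were added or removed, and every step stays differentiable with respect to the scores. Note that with unit entropic temperature some mass always leaks to the dustbins, so in practice the score scale (here ±10) matters and would be learned.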

